ChatGPT vs. Qwen 2.5

Artificial intelligence tools continue to improve rapidly. Among the many models and platforms available, ChatGPT by OpenAI and Qwen 2.5 by Alibaba Cloud are two of the most discussed. Both offer extensive features, and each involves trade-offs. Understanding the differences can help you decide which is the better fit for your needs, whether that is writing, coding, learning, or building applications.

This comparison covers architecture, performance, features, user experience, pricing, strengths and limitations, and use cases.

What Is Qwen 2.5?

Qwen 2.5 is easiest to understand by starting from the public technical reports and announcements.

- Qwen 2.5 is a family of large language models (LLMs) developed by Alibaba’s Qwen team.

- Most models in the family are released with open weights under permissive licenses such as Apache 2.0, in sizes from 0.5B to 72B parameters. The exact license depends on the model variant.

- One of its main features is a very long context window. Some variants support up to 128,000 tokens of context, which lets the model keep the earliest parts of very long texts, dialogues, or reasoning chains in view.

- Some variants are multimodal. For example, Qwen 2.5-VL (Vision-Language) can process images, such as scanned documents, in addition to text.

- Its training dataset was very large (about 18 trillion tokens), and instruction following, structured output, and related capabilities improved.

These factors put Qwen 2.5 among the open LLMs that perform on par with several closed models in some benchmark studies.
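To make the 128,000-token context figure above more concrete, here is a minimal Python sketch that estimates whether a document is likely to fit. It assumes a crude rule of thumb of about 4 characters per token for English text; that ratio, the function names, and the output reserve are illustrative assumptions, not values from Qwen or OpenAI. A real check would count tokens with the model's own tokenizer.

```python
# Rough check of whether a text is likely to fit in a model's context window.
# ASSUMPTION: ~4 characters per token is a crude heuristic for English text;
# real counts depend on the tokenizer and the language.

CHARS_PER_TOKEN = 4        # heuristic, not a Qwen or OpenAI constant
CONTEXT_LIMIT = 128_000    # the 128K-token figure quoted above


def estimate_tokens(text: str, chars_per_token: int = CHARS_PER_TOKEN) -> int:
    """Return a rough token estimate (rounded up) for the given text."""
    return -(-len(text) // chars_per_token)  # ceiling division


def fits_in_context(text: str, limit: int = CONTEXT_LIMIT,
                    reserve_for_output: int = 2_000) -> bool:
    """True if the estimated prompt size still leaves room for the reply."""
    return estimate_tokens(text) + reserve_for_output <= limit


report = "word " * 100_000  # 500,000 characters, standing in for a long report
print(estimate_tokens(report), fits_in_context(report))
```

Reserving some tokens for the reply matters because the context window usually has to hold both the input and the generated output.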

What Is ChatGPT?


ChatGPT is an assistant created by OpenAI and built on a large language model; the "GPT" in its name stands for Generative Pre-trained Transformer. It is known for:

- Convincing natural language comprehension and generation.

- A stable, well-implemented ecosystem of tools and integrations.

- Good results across tasks such as prompting, context management, coding, creative writing, summarization, and translation.

- Iterative development: newer versions are generally more precise, follow user instructions better, and produce fewer unwanted outputs (hallucinations, off-topic drift, etc.).

The code and models behind ChatGPT are largely proprietary rather than open source. OpenAI controls which fine-tuned versions, plugin integrations, and features are available. Other restrictions include context length limits (which depend on the version and the plan) and performance that varies with prompt complexity.

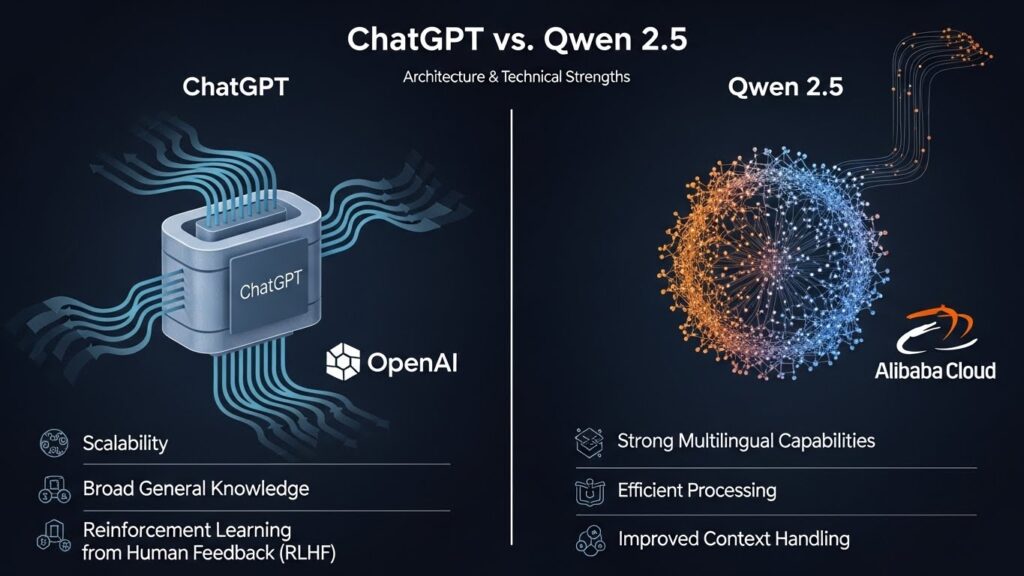

Architecture & Technical Strengths

Here is how the two compare on architecture and technical features:

| Feature | Qwen 2.5 | ChatGPT |
|---|---|---|
| Parameter Range / Model Sizes | Multiple sizes, from ~0.5B up to ~72B parameters. This allows choosing a model suited to resource constraints or performance needs. | Also multiple versions, but many are closed or limited in access. Scales to large model sizes, but not with open weights. |
| Context Window (Length of Input It Can Handle Well) | Up to 128K tokens in certain variants. Very useful for long documents, codebases, or long dialogues. | Good context windows, but the limit depends on the version and plan, and may be shorter than Qwen’s top models in many usage settings. |
| Multimodal Input / Vision + Language | Available in Qwen 2.5-VL models. These can process images, documents, screenshots, etc., and combine them with language tasks. | Some versions support images and are good at describing them; newer versions may handle multimodal inputs depending on plan. Availability might depend on subscription or platform. |
| Instruction-Following, Fine-Tuning | Strong improvements in instruction following; variants allow fine-tuning; various sizes suited to research, coding, and structured outputs. | Also strong; many established pipelines, a large community, and extensive prompt engineering. Often has official fine-tuned variants and broad support for instruction tuning. |
| Open Source / Licensing | Many models are open weights or permissively licensed (Apache 2.0 etc.), which helps with deployment, research, and modification. | Mostly proprietary; users of ChatGPT generally do not have access to the underlying model weights. Access is via API or service. |

Performance & Benchmarks

- Qwen 2.5 has been benchmarked on multilingual tasks, reasoning, code generation, vision-language tasks, and more. Some variants, such as VL-32B, are reported to outperform comparable models of the same size on certain vision-language benchmarks.

- According to benchmark data published by Alibaba, Qwen 2.5-Max outperforms models such as DeepSeek V3 and Llama 3.1 on numerous benchmarks.

- ChatGPT is typically strong at general knowledge, conversation, coherence, alignment with human preferences, and smooth interaction. Benchmarks still show trade-offs: reasoning tends to be strongest on shorter contexts, and difficulties can arise with very long contexts or specialized domains.

Usability & Ecosystem

Here is how the two fare in real-world use: the tools they provide, ease of deployment, and so on.

Qwen 2.5:

- Because most variants are open source, users can run the smaller models locally or in resource-constrained environments.

- The long context window helps with tasks such as summarizing long reports, interpreting legal documents, and handling multiple documents as input.

- The multimodal models are also effective for these applications because they can read images and documents and combine them with text.

- According to reports, the model supports 29+ languages, which makes it useful for multilingual users.

- Open-source licensing gives more freedom to modify the model, build new applications, run it offline, or embed it in your own proprietary systems (depending on the variant).

ChatGPT:

- A large user community, many software tools, stable infrastructure, and regular updates.

- Excellent UX, user interface, reliability, content quality, safety, and alignment.

- Users benefit from shared experience, community knowledge, and abundant tutorials.

- Documentation is usually better, and there are more third-party plugins and tools.

- It is more established among general users, so it has a longer track record and takes less effort to get started with.

Limitations & Trade-offs

No model is perfect. Each has constraints.

Where Qwen 2.5 may fall short:

- Running very large models (like 72B) requires significant hardware resources, which can make deployment expensive.

- Although it is open source and flexible, some variants may still struggle in specific situations (e.g., hallucinations or performance drops in niche domains), depending on the domain, prompt engineering, etc.

- Multimodal understanding is impressive, but it likely has limits on perception tasks (complex images, video, etc.) compared to highly specialized vision models.

- Tooling and support for Qwen (especially in non-Chinese markets) may not be as mature or widespread as for ChatGPT.

Where ChatGPT may fall short:

- Costs can be high, especially for premium versions or a large number of API calls.

- Access limitations: certain functionalities (multimodal, large context, advanced capabilities) might be available only on specific subscription tiers.

- Model weights are usually not available for users to inspect or run locally (especially for large and recent versions).

- Context windows might be smaller, which could limit how well very long or complex content can be handled.

- It depends on an external API or infrastructure, so there is less flexibility for offline use or for settings with strict data and privacy restrictions.

Which Use-Cases Favor Which Model

Depending on what you want to do, one model may suit better than the other.

| Use Case | Likely Better Model | Why |
|---|---|---|
| Writing articles, blog posts, essays | ChatGPT (unless you specifically need open source, very long context, or offline use) | ChatGPT’s polish, style, coherence, and tooling excel. |
| Document summarization, legal or research reports with many pages | Qwen 2.5 (especially large-context variants) | Long token window and ability to process large inputs. |
| Multimodal tasks (image+text, document scanning, vision tasks) | Qwen 2.5-VL models may have an advantage | Designed for vision-language tasks. |
| Custom deployment / offline usage / integrating into private systems | Qwen 2.5 (open source variants) | More flexibility; licensing allows more control. |
| Chatbots for general conversation, customer support, interactive conversation | ChatGPT | Might have better user experience, safety, and smoother behavior. |
| Coding help / programming tasks | Both are strong (Qwen Coder vs. a code-capable ChatGPT version, depending on version) | ChatGPT has more mature integrations. |

Future & Trends

- Multimodal capability, combining text, image, audio, etc., is a major trend, and Qwen 2.5 is an example of it.

- Growing the context window is one of the big challenges in AI development (greater coherence with more input). Qwen’s 128K context puts it close to the front of the field, and ChatGPT and others are working on this as well.

- Open vs. proprietary: because Qwen is open source, it attracts more community contributions, which may lead to faster iteration in some areas. However, users carry more responsibility for fine-tuning, testing, and safety.

- Competition in benchmarks and evaluations is increasingly intense; Qwen 2.5 claims to match or outperform existing leading models in some areas.

Final Thoughts: ChatGPT vs. Qwen 2.5

Both ChatGPT and Qwen 2.5 are strong tools. Which one is “better” depends mainly on your priorities:

- If you want a reliable tool with a mature, user-friendly interface and a big ecosystem, ChatGPT is a great choice.

- If your priorities are open weights, long document or context support, multimodal inputs (images/documents), or offline or custom deployment, Qwen 2.5 is more suitable.

In practice, using both for different tasks may be most efficient: Qwen 2.5 for document-heavy work or where you need open-source flexibility, and ChatGPT where you need stylistic polish, creativity, or wide plugin support.
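One way to picture using both models side by side is a small rule-based router. The Python sketch below is only an illustration of the guidance in this article: the `Task` fields, the 32,000-token threshold, and the `"qwen2.5"` / `"chatgpt"` labels are hypothetical names chosen for the example, and no real API is called.

```python
# Minimal rule-based router mirroring the guidance in this article.
# ASSUMPTION: the Task fields, the threshold, and the returned labels are
# hypothetical and exist only for illustration; no real API is called.
from dataclasses import dataclass


@dataclass
class Task:
    prompt_tokens: int = 0          # estimated size of the input
    needs_offline: bool = False     # must run without an external service
    needs_open_weights: bool = False


LONG_DOCUMENT_TOKENS = 32_000  # hypothetical cut-off for "document-heavy"


def choose_model(task: Task) -> str:
    """Pick a model label using the article's rules of thumb."""
    if task.needs_offline or task.needs_open_weights:
        return "qwen2.5"   # open weights allow local or custom deployment
    if task.prompt_tokens > LONG_DOCUMENT_TOKENS:
        return "qwen2.5"   # long-context, document-heavy work
    return "chatgpt"       # default for polish and general conversation


print(choose_model(Task(prompt_tokens=90_000)))
print(choose_model(Task(prompt_tokens=500)))
```

A real setup would likely weigh cost, latency, and data-privacy rules as well; the point here is only that the choice can be made per task rather than once for everything.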

FAQs: ChatGPT vs. Qwen 2.5

1. Does Qwen 2.5 outperform ChatGPT in all tasks?

No. Qwen 2.5 is stronger in several areas (such as long-context, document-heavy, or vision-language tasks) and does very well on particular benchmarks. However, in creative writing, dialogue polish, safety, and user experience, ChatGPT might be stronger. The answer depends on the scenario.

2. Can I use Qwen 2.5 offline or locally?

Yes, most of the Qwen 2.5 variants are open source, so you can run them locally if you have enough hardware. Smaller parameter models (0.5B, 1.5B, etc.) are more suitable for local use; the bigger ones require powerful GPUs or cloud infrastructure.
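As a rough guide to "enough hardware", the memory needed just to hold a model's weights can be estimated as parameter count times bytes per parameter. The Python sketch below applies that arithmetic to the sizes mentioned in this article. It is a lower bound under stated assumptions: activations, the KV cache, and framework overhead are ignored, and the precision labels are generic rather than tied to any particular Qwen release.

```python
# Back-of-the-envelope memory needed just to hold model weights.
# ASSUMPTION: memory ~= parameter count x bytes per parameter. Activations,
# the KV cache, and framework overhead are ignored, so real usage is higher.

BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params_billion: float, precision: str = "fp16") -> float:
    """Approximate weight size in GB (1 GB = 1e9 bytes).

    Billions of parameters x bytes per parameter gives GB directly,
    because the two factors of 1e9 cancel.
    """
    return params_billion * BYTES_PER_PARAM[precision]


for size in (0.5, 1.5, 72):  # model sizes in billions of parameters
    row = ", ".join(
        f"{p}: {weight_memory_gb(size, p):.2f} GB" for p in BYTES_PER_PARAM
    )
    print(f"{size}B -> {row}")
```

By this estimate a 72B model needs on the order of 144 GB for 16-bit weights alone, which is why the largest variants call for multiple GPUs or cloud infrastructure, while the smallest fit comfortably on consumer hardware.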

3. Are there any licensing restrictions for Qwen 2.5?

Yes. Many variants have permissive licenses, but not all. Some larger models may have more restrictive licenses or be for research only, so check the license of the specific Qwen model you want to work with.

4. What about safety, hallucinations, factual correctness?

Both ChatGPT and Qwen 2.5 can hallucinate (i.e., make up false information); neither is perfect. Factual accuracy depends heavily on how you design the prompt, whether the model is instruction-tuned, whether you check sources, and which variant you use. More training and better fine-tuning generally improve accuracy on factual tasks, but they do not eliminate errors.

5. Which is more cost-efficient?

With a ChatGPT subscription or API plan, the cost is fairly predictable but may be on the higher side. Running Qwen 2.5 locally (if that is an option) or using smaller models can lower the cost significantly. Keep in mind that large variants, high token counts, and GPU-intensive tasks increase the computational expense. Cost efficiency therefore depends heavily on your usage pattern (model size, context length, deployment environment).
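The trade-off can be sketched with simple arithmetic. The Python example below compares a pay-per-token API bill with the GPU rental time needed to process the same tokens on a self-hosted model. Every number in it (price per million tokens, throughput, GPU hourly rate, monthly workload) is a made-up placeholder, not a real OpenAI or Alibaba Cloud rate; substitute current figures before drawing conclusions.

```python
# Toy comparison of pay-per-token API cost vs. renting a GPU for self-hosting.
# ASSUMPTION: every price and throughput figure here is a made-up placeholder,
# not a real OpenAI or Alibaba Cloud rate. Substitute current numbers.


def api_cost(tokens: int, price_per_million: float) -> float:
    """Cost of processing `tokens` at a flat per-million-token price."""
    return tokens / 1_000_000 * price_per_million


def self_hosted_cost(tokens: int, tokens_per_second: float,
                     gpu_price_per_hour: float) -> float:
    """Cost of the GPU time needed to process `tokens` at steady throughput."""
    hours = tokens / tokens_per_second / 3600
    return hours * gpu_price_per_hour


monthly_tokens = 50_000_000  # hypothetical workload
api = api_cost(monthly_tokens, price_per_million=5.0)
local = self_hosted_cost(monthly_tokens, tokens_per_second=500,
                         gpu_price_per_hour=2.0)
print(f"API:         ${api:.2f}")
print(f"Self-hosted: ${local:.2f}")
```

This sketch leaves out idle GPU time, setup and maintenance effort, and the fact that larger variants run at lower throughput, all of which can shift the balance back toward a hosted service.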